The Way to Smart Manufacturing

Author(s)

Chang-Shing Lee, Mei-Hui Wang, Naoyuki Kubota, & Li-Wei Ko

Biography

Chang-Shing Lee (IEEE Senior Member) received the Ph.D. degree in Computer Science and Information Engineering from the National Cheng Kung University, Taiwan, in 1998. He is currently a Professor with the Department of Computer Science and Information Engineering, National University of Tainan (NUTN), Taiwan.

Mei-Hui Wang received the M.S. degree in electrical engineering from Yuan Ze University, Taiwan, in 1995. She is currently a Researcher with the OASE Lab., National University of Tainan, Taiwan.

Naoyuki Kubota received the D.E. degree from Nagoya University, Nagoya, Japan, in 1997. He is currently a Professor in the Dept. of System Design, Tokyo Metropolitan University, Tokyo, Japan.

Li-Wei Ko received the Ph.D. degree in electrical engineering from National Chiao Tung University, Taiwan, in 2007. He is currently an Associate Professor with the Institute of Bioinformatics and Systems Biology, National Chiao Tung University, Taiwan.

Academy/University/Organization

National University of Tainan

Source

(1) C. S. Lee, M. H. Wang, L. W. Ko, Y. Hsiu Lee, H. Ohashi, N. Kubota, Y. Nojima, and S. F. Su, “Human Intelligence Meets Smart Machine at IEEE SMC 2018,” IEEE Systems, Man, and Cybernetics Magazine, 2019 (Accepted and will be published in 2020). (DOI: 10.1109/MSMC.2019.2948050)

(2) C. S. Lee, M. H. Wang, Y. L. Tsai, L. W. Ko, B. Y. Tsai, P. H. Hung, L. A. Lin, and N. Kubota, “Intelligent agent for real-world applications on robotic edutainment and humanized co-learning,” Journal of Ambient Intelligence and Humanized Computing, 2019.

https://link.springer.com/article/10.1007/s12652-019-01454-4

You are free to share this article under the Attribution 4.0 International license

- ENGINEERING & TECHNOLOGIES

- Text & Image

- December 20, 2019

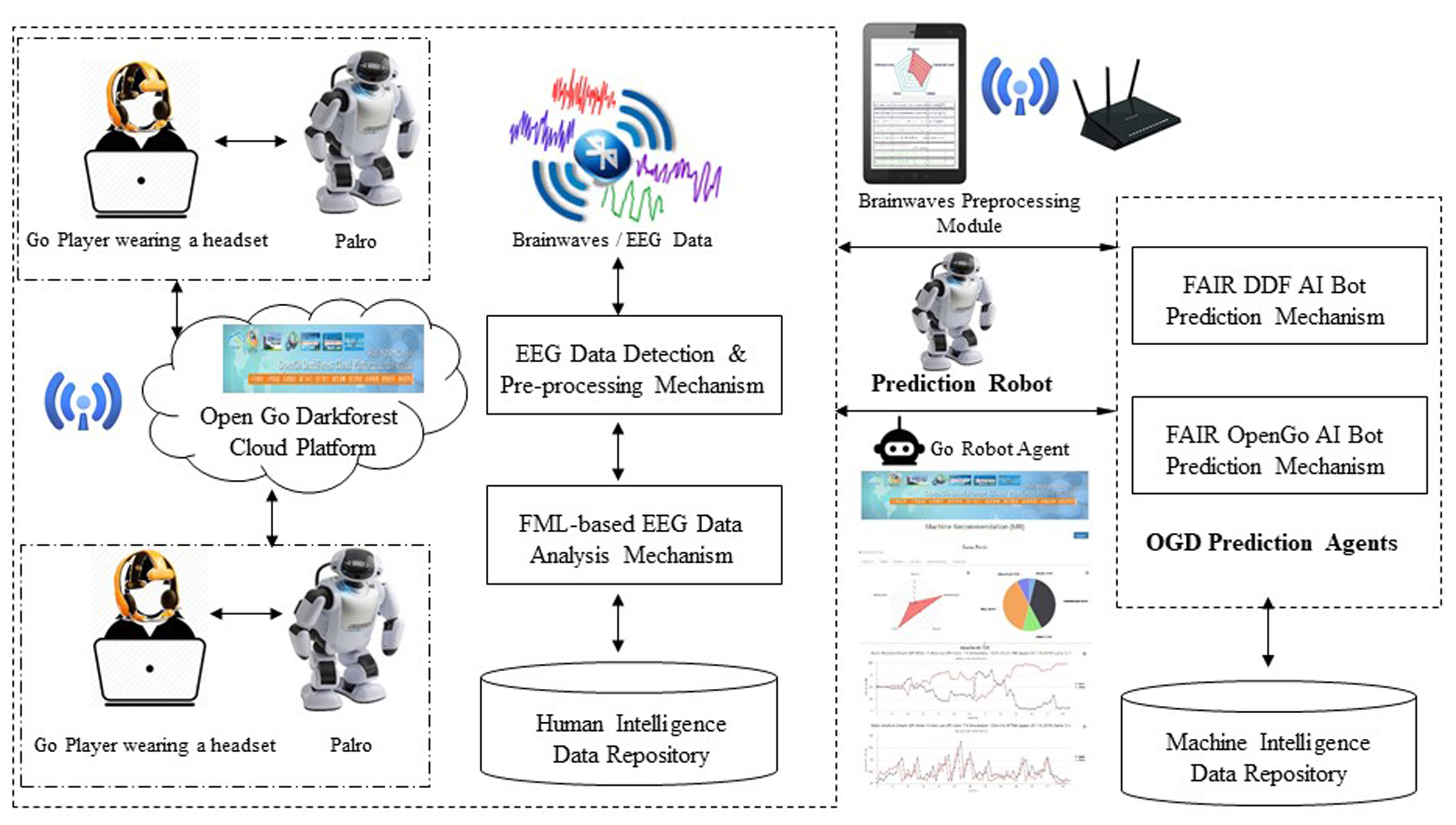

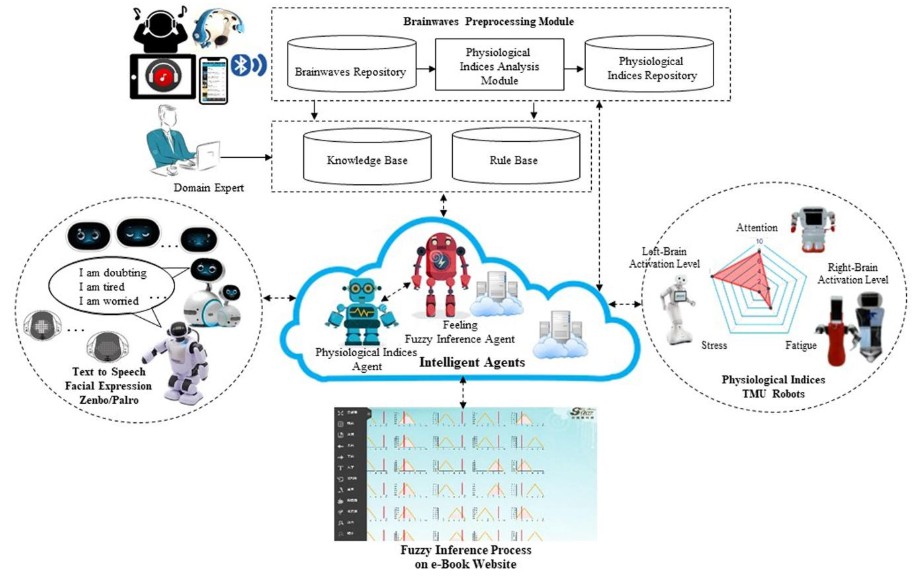

Dynamic assessment with an intelligent agent can differentiate students' capabilities and proficiency, so it can be advocated as an interactive approach to assessing students in learning systems. Recently, researchers have been working to develop and explore a model of an intelligent robot teacher that can learn with humans. We adopt a commercial eight-channel EEG device to collect EEG signals from a subject who is learning or listening to music. The proposed wearable brain-computer interface (BCI) system monitors the subject's physiological states to infer real-time emotions and then communicates these emotions to the robot Zenbo Junior or Kebbi Air. Our aim is to observe the relationship between the subject's physiological indicators (attention, left-brain activation, right-brain activation, stress, and fatigue) and the subject's real-time emotions. The main contribution of this research is insight into how machines and humans can co-learn, which will be useful for future educational applications. From 2018 to 2019, more than 350 students co-learned with intelligent robot teachers such as Zenbo, Zenbo Junior, Kebbi Air, and Palro in Tainan, Kaohsiung, Taipei, and Tokyo. Learning performance and feedback from students and teachers have been extremely positive, especially for remedial students. The experiments also show a relationship between the physiological indices and the learners' culture and their familiarity with and preference for the songs they listen to.

Various types of robots are now being applied to education combined with entertainment (edutainment), and human-robot cooperation is playing an important role in industrial development in the age of artificial intelligence (AI). In the developed human and smart machine co-learning model, robot teachers assist human teachers by handling routine daily tasks and by explaining concepts to students repeatedly without tiring or becoming impatient. As a result, human teachers have more time to take care of slow learners. This article presents an intelligent agent for real-world applications in robotic edutainment and humanized co-learning, focusing on two main applications: (1) English language learning: Most students welcomed the robot teachers. The experimental results showed that low-ability students made the most progress and also rebuilt their confidence and interest in learning English. (2) Listening to music for entertainment: The real-time feelings of a person who is singing or listening to a song were inferred by the developed TSK-based fuzzy inference mechanism. We then used the robot Zenbo Junior or Kebbi Air to display the person's feelings on the robot's face.
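To make the idea of a TSK (Takagi-Sugeno-Kang) fuzzy inference mechanism more concrete, the following is a minimal sketch of first-order TSK inference over three of the physiological indices mentioned above. It is not the authors' model: the input names, the normalization of each index to [0, 1], the Gaussian fuzzy sets `LOW` and `HIGH`, the three rules, and all coefficients are hypothetical and chosen only for illustration. The output is read here as a feeling score in roughly [-1, 1], which a robot could map to a facial expression.

```python
import math

# Assumption: each physiological index is normalized to [0, 1].
INPUT_NAMES = ("attention", "stress", "fatigue")

# Hypothetical Gaussian fuzzy sets, given as (center, width).
LOW = (0.0, 0.3)
HIGH = (1.0, 0.3)


def gaussian(x, center, width):
    """Membership degree of x in a Gaussian fuzzy set."""
    return math.exp(-((x - center) ** 2) / (2 * width ** 2))


# Each rule pairs an antecedent (fuzzy set per input) with the coefficients
# (c0, c_attention, c_stress, c_fatigue) of a linear consequent.
# These rules are illustrative only, not taken from the article.
RULES = [
    ({"attention": HIGH, "stress": LOW, "fatigue": LOW}, (0.7, 0.3, 0.0, 0.0)),
    ({"stress": HIGH}, (-0.5, 0.0, -0.5, 0.0)),
    ({"fatigue": HIGH, "attention": LOW}, (-0.3, 0.0, 0.0, -0.4)),
]


def tsk_infer(inputs):
    """Return the firing-strength-weighted average of the rule outputs."""
    weighted_sum = 0.0
    total_strength = 0.0
    for antecedent, coeffs in RULES:
        # Firing strength: product of the membership degrees (product t-norm).
        strength = 1.0
        for name, (center, width) in antecedent.items():
            strength *= gaussian(inputs[name], center, width)
        # First-order TSK consequent: a linear function of the crisp inputs.
        output = coeffs[0] + sum(
            c * inputs[name] for c, name in zip(coeffs[1:], INPUT_NAMES)
        )
        weighted_sum += strength * output
        total_strength += strength
    return weighted_sum / total_strength if total_strength > 0 else 0.0


if __name__ == "__main__":
    relaxed = {"attention": 0.9, "stress": 0.1, "fatigue": 0.1}
    strained = {"attention": 0.2, "stress": 0.9, "fatigue": 0.8}
    print("relaxed listener:", round(tsk_infer(relaxed), 3))
    print("strained listener:", round(tsk_infer(strained), 3))
```

With these made-up rules, an attentive and relaxed listener yields a positive score and a stressed, tired listener a negative one. In a real system the fuzzy sets and consequent coefficients would be defined by experts or learned from data rather than set by hand.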

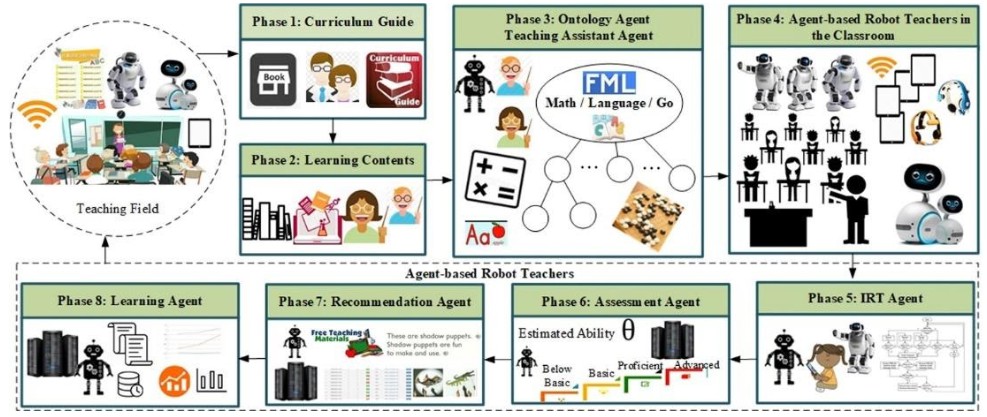

Fig. 1 shows the ontology-based future educational ecosystem for agent-based robot teachers, which can learn various domains of knowledge, such as mathematics, languages (e.g., English), fuzzy markup language (FML), and the game of Go, along with students in the classroom. It operates as follows:

(1) Phases 1 and 2: Domain experts and senior teachers define the curriculum guide for each subject. Publishers then invite human experts to plan and set out the course outlines by following the curriculum guide, and invite other human experts to create the learning content.

(2) Phase 3: The ontology agent and the teaching assistant agent construct the domain ontology for the robot teachers' learning.

(3) Phase 4: The proposed model is introduced into the teaching field so that the robot teachers and students can learn together in classrooms based on the adaptive learning schedule. The robots Zenbo, Zenbo Junior, or Kebbi Air serve as teaching assistants who interact with students in the classroom to stimulate their learning motivation and help them make progress.

(4) Phases 5 and 6: Each student in the classroom uses a handheld device to respond to the items on the test paper. The robot teachers Zenbo, Zenbo Junior, or Kebbi Air select suitable items for students based on their real-time responses and assess each student's level of ability.

(5) Phases 7 and 8: The data are then collected and analyzed by the platform established in Taiwan and Japan. Robot teachers offer individual feedback to students by selecting suitable teaching materials for their next session of adaptive learning. The robot teachers assess each student's actual ability for the next teaching and learning session and organize the learning process based on each student's learning history. All of the analyzed results are fed back to the teaching field to further refine the robot teachers in the classroom.
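The item selection and ability assessment described for Phases 5 and 6 can be sketched as a simple adaptive testing loop. The article does not say which assessment model the robot teachers use, so this sketch assumes a one-parameter (Rasch) item response model as a stand-in. The item bank, the difficulty values, the step size, and the function names are all hypothetical.

```python
import math

# Hypothetical item bank: item id -> difficulty on the same scale as ability.
ITEM_BANK = {"q1": -2.0, "q2": -1.0, "q3": 0.0, "q4": 1.0, "q5": 2.0}


def p_correct(ability, difficulty):
    """Rasch model: probability that a student answers an item correctly."""
    return 1.0 / (1.0 + math.exp(-(ability - difficulty)))


def select_item(ability, item_bank, asked):
    """Pick the unasked item whose difficulty is closest to the estimate."""
    candidates = [item for item in item_bank if item not in asked]
    return min(candidates, key=lambda item: abs(item_bank[item] - ability))


def update_ability(ability, difficulty, correct, step=0.5):
    """Move the estimate by the gap between observed and expected outcome."""
    observed = 1.0 if correct else 0.0
    return ability + step * (observed - p_correct(ability, difficulty))


def run_session(item_bank, answer_fn, n_items=5, ability=0.0):
    """Ask up to n_items items; answer_fn(item) returns True if correct."""
    asked = []
    for _ in range(min(n_items, len(item_bank))):
        item = select_item(ability, item_bank, asked)
        correct = answer_fn(item)  # e.g., the response from a handheld device
        ability = update_ability(ability, item_bank[item], correct)
        asked.append(item)
    return ability, asked


if __name__ == "__main__":
    # Simulated student who answers only the three easiest items correctly.
    estimate, order = run_session(ITEM_BANK, lambda item: ITEM_BANK[item] <= 0.0)
    print("items asked:", order)
    print("estimated ability:", round(estimate, 3))
```

Each response nudges the ability estimate up or down, and the next item is chosen to match the current estimate, so stronger students are steered toward harder items and weaker students toward easier ones. A deployed system would more likely use a calibrated item bank and a proper estimator such as maximum likelihood.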

Fig. 1. Ontology-based future educational ecosystem for agent-based robot teachers.

Fig. 2. Structure of intelligent agents on robotic edutainment.
